The developer team is very happy to announce the release of OpenLB 1.7, the next version of our open-source Lattice Boltzmann (LB) code, which is now available for download.

Major changes include the adaptation of many existing models to the GPU-supporting operator style, a validated turbulent velocity inlet condition, and a special focus on new multiphase and particle models. These are complemented by a collection of bugfixes and general usability improvements.

For the first time, this release is also available in a public Git repository that contains all previous releases as well. We encourage everyone to submit contributions as merge requests and to report issues there.

Core development continues within the existing private repository, which is available to consortium members.

Release notes

New features and improvements

- Many existing models converted to the operator-style (“GPU support”)

- New multiphase models, interaction potentials and examples

- New Unit Converter for multiphase simulations

- New validated turbulent inlet condition: Vortex Method

- New particle decomposition scheme that improves parallel performance of fully resolved particulate flow simulations using HLBM

- New zero-gradient boundary condition

- Cleanup and (performance) improvements of the optimization code

- Optional support for loading porosity data using OpenVDB voxel volumes

New examples

- multiComponent/airBubbleCoalescence3d

- multiComponent/waterAirflatInterface2d

- advectionDiffusionReaction/longitudinalMixing3d

- advectionDiffusionReaction/convectedPlate3d

- porousMedia/city3d

- porousMedia/resolvedRock3d

Examples with full GPU support

- turbulence/tgv3d

- turbulence/nozzle3d

- turbulence/venturi3d

- turbulence/aorta3d

- laminar/poiseuille(2,3)d

- laminar/poiseuille(2,3)dEoc

- laminar/cylinder(2,3)d

- laminar/bstep(2,3)d

- laminar/cavity(2,3)d

- laminar/cavity3dBenchmark

- laminar/testFlow3dSolver

- laminar/powerLaw2d

- laminar/cavity2dSolver

- multiComponent/fourRollMill2d

- multiComponent/rayleighTaylor3d

- multiComponent/youngLaplace3d

- multiComponent/binaryShearFlow2d

- multiComponent/microFluidics2d

- multiComponent/contactAngle(2,3)d

- multiComponent/phaseSeparation(2,3)d

- multiComponent/rayleighTaylor2d

- multiComponent/airBubbleCoalescence3d

- multiComponent/waterAirflatInterface2d

- multiComponent/youngLaplace2d

- advectionDiffusionReaction/advectionDiffusion(1,2,3)d

- advectionDiffusionReaction/convectedPlate3d

- thermal/squareCavity2d

- thermal/porousPlate(2,3)d

- thermal/squareCavity3d

- thermal/rayleighBenard(2,3)d

- porousMedia/city3d

- porousMedia/resolvedRock3d

- freeSurface/fallingDrop(2,3)d

- freeSurface/breakingDam(2,3)d

- freeSurface/rayleighInstability3d

- freeSurface/deepFallingDrop2d

Citation

If you want to cite OpenLB 1.7, you can use:

A. Kummerländer, T. Bingert, F. Bukreev, L. Czelusniak, D. Dapelo, N. Hafen, M. Heinzelmann, S. Ito, J. Jeßberger, H. Kusumaatmaja, J.E. Marquardt, M. Rennick, T. Pertzel, F. Prinz, M. Sadric, M. Schecher, S. Simonis, P. Sitter, D. Teutscher, M. Zhong, and M.J. Krause.

OpenLB Release 1.7: Open Source Lattice Boltzmann Code.

Version 1.7. Feb. 2024.

DOI: 10.5281/zenodo.10684609

General metadata is also available as a CITATION.cff file following the standard Citation File Format (CFF).

Supported Systems

For block-local processing, OpenLB can utilize vectorization (AVX2/AVX-512) on x86 CPUs [1] as well as NVIDIA GPUs. CPU targets may additionally use OpenMP for shared-memory parallelization, while all communication between individual processes is performed using MPI.

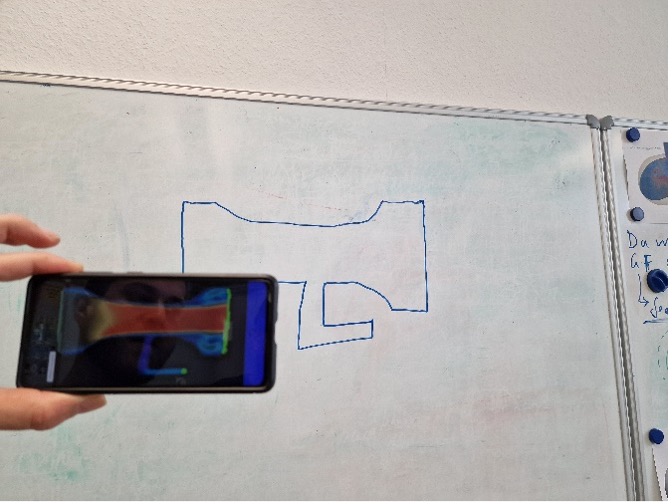

OpenLB has been successfully used for simulations on hardware ranging from low-end smartphones and multi-GPU workstations up to supercomputers, and it even runs in a web browser.

The present release has been explicitly tested in the following environments:

- Red Hat Enterprise Linux 8.x (HoreKa, BwUniCluster2)

- NixOS 22.11, 23.11 and unstable (Nix Flake provided)

- Ubuntu 20.04 and newer

- Windows 10 and 11 via WSL

- macOS Ventura 13.6.3

[1]: Other CPU targets are also supported, e.g. common smartphone ARM CPUs and Apple M1/M2.